PART I

When you think about it, the evolution of language is a compelling topic for literary folks, and ought to be required study for literary critics. People have an innate capacity for language. The neurological center—what we might by analogy call the cellular “processor”—lies in an organized nucleus of cells in that part of the brain right behind your left ear. Language is not a town-made capacity: it is hard-wired in, as are the other senses, such as eyesight. Our vision has evolved to detect a useful, finite spectrum of electromagnetic radiation emanating from the outside world. Using eyesight, we can detect important things out there: I can see the prey I want to kill and eat, notice the vegetable world from which to select edibles, ogle the other members of my species with whom I long to mate.

There are those of us human beings, of course, who have preferred to mate with species other than our own. The example of shepherds lying with their sheep is Biblical in scope, and I myself have known a particular farmer who would have sex with one of his cows. The give-away was the animal hair and fecal matter spread all down the front of his overalls. And as I recall, Governor Winship in the Plymouth Colony hanged one of the original pilgrims for having sex with a turkey. They also hanged the turkey, which is sadly, grimly humorous. Those first pilgrims meant business.

With all this acknowledged, no one would say that the interspecies sex was a consequence of poor eyesight. They could see what they were doing, make selective choices among alternative beings in the world—because their capacity for vision referred to a material world existing outside of their mental activity.

You can maybe imagine language acting in a similar way. Spear in hand, you and your companion are out hunting for a woolly mammoth to kill, when the guy beside you abruptly yells “Run!” or something similar. In this way language might be immediately useful, multiplying the scope of the other senses, which have also evolved to respond to environmental events. The immediate assumption might be that the language has expressed the need for intelligent, discriminating behavior, quickly executed in the material world, in response to changing material conditions. It wouldn’t do, for instance, to run toward the source of threat—and in fact, if your companion took the necessary time to do the thing rightly, he might yell “Run from the charging mammoth directly to our right.”

The immediate assumption might be that the eyesight detected something in the environment to which the imperative linguistic product referred—and referred as well to the speed of the approach, to the direction from which it was advancing, and perhaps even to the intended mayhem that the advance suggested.

Those philosophically minded hunters for whom language did not refer to any referent, for whom no real ‘signified’ existed behind the ‘sign’, might prefer to deconstruct the etymology of the verb ‘run’, to quibble with the definition of ‘mammoth,’ or to be concerned about the inaccuracy of the word ‘right.’ However, that misconception of language would carry its own sad correction, and our brainy hunter would not live to reproduce either with his own species, or with any other preferred choice.

These days, those philosophically minded hunters roam through many university literature departments—where they are also about to become extinct, I fear. But that is the subject of another conversation.

PART II

“If I now tell you that my old dog, with his few sad last grey hairs, is sleeping by my woodstove, I trust you would not come into my house expecting to find an elk, and that if you did, I would be justified in believing you were fucking weird and never letting you near my dog again.”

I have already asked you to imagine yourself as a neolithic hunter roaming around, spear in hand, and using language to negotiate dangers originating in the natural, unconstructed world. This time I’d like to imagine something a bit more probable: that we are contemporary neuroscientists. As such, we can acknowledge our incredulity at post-modern language theory—because we are starting with a different concept of evidence, and indeed with a different conceptual pedigree entirely. As scientists we are looking at the neurological bases of behavior, the source of which is an organ—the brain—that has evolved over immensities of time, in response to uncountable numbers of environmental interactions, so that its capacities are determined according to its fit in its material niche. There are other niches, but we do not fit in them: for instance, we cannot breathe too good under water, we cannot eat bamboo for any length of time and survive, we cannot in arid places go for months without water. It is up to other animals to fill those niches.

We inhabit the niche we are designed to inhabit—which makes good tautological sense. I have more to say about this topic, but because I am at heart a shy and modest person, and so do not want to flash my naked, unseemly nerdism, I have provided links to brief lectures: one regarding the neurological areas in the brain responsible for language (http://www.youtube.com/watch?v=fFGmCRc0njk); the other regarding a neuroanatomical area that coordinates our mental and physiological rhythms—called circadian rhythms—with the cyclical presence and absence of sunlight (http://www.youtube.com/watch?v=43E6Q7a8X68).

What these links will do is provide some evidence—as well as further links to the world of other related evidence—that I am not just making this shit up. The entire worldwide community of neuroscientists believes from vast experimental evidence that, down to the most intimate neurophysiological degree—down into our very cells—we are tied to events in the natural world around us. And language, as a neurologically wired capacity, is a feature of that linkage. As thinking, speaking human beings, we are as totally synched to the events in the natural world as our iPhones, Droids and iPads are synched to our computers.

Postmodernism has a briefer pedigree: perhaps if we stretch things we can extend it back to Kant and his belief that the noumenon cannot be understood, but we might all feel more confident with a less ambitious lineage extending from Nietzsche through Husserl and Heidegger into Levinas, Barthes and Derrida, then forward to the current intellectual heirs. This is a Continental heritage, and works most persuasively with Continental languages. The way in which Kanji, for instance, purports to refer to its signified clearly works on principles that are not well-characterized by Western examples.

But even with the Continental tongues, the referent to which a sign points is not commonly in question. If, for instance, I now tell you that my old dog, with his few sad last grey hairs, is sleeping by my woodstove, I trust you would not come into my house expecting to find an elk, and that if you did, I would be justified in believing you were fucking weird and never letting you near my dog again. Some of you might even catch the intentional allusion to Keats. Further, if we were honest among ourselves, we would recognize that the books and articles Derrida has written were published with the particular intent to communicate his ideas, which he worked with discernible effort to convey accurately. If you happened to attend one of his lectures at the University of California, Irvine, where he taught in his later years (and from which, I blush to confess, I graduated), you could have enjoyed his personal, extended, elegant use of language as it was classically conceived—and even asked in interrogative sentences what he meant by the ‘trace’ that language unearths.

PART III

“In which Postmodern Despair is Vanquished, and We Can Return to our Universities and Teach Poetry.”

My point, my purpose in making my previous observations, is this: there is a disconnection between language as it is now philosophically conceived in postmodern discourse, and language as it is commonly used—even among the philosophers themselves. When Derrida and the murmuration of his followers reduce meaning solely to the relationship inhering between the sign and the signified—the noun and its referent—they are omitting the vast majority of linguistic functions. Accordingly, they have imported a reductivist platform that is being made to stand for the whole, immense range of expressive uses. Just to pick one immediate literary example, when Mark Antony at Caesar’s funeral keeps repeating his observation, “And Brutus is an honorable man,” the meaning of that phrase—understood by all who hear it—has nothing to do with the literal referent.

The unpublished intention, implicit in the sign/signified postulation, is to introduce an unacknowledged axiom: that the true purpose of language is to reveal the ontologically real. The postmodern formulation tacitly asserts that language is not conceived for quotidian uses (“While you’re out, will you bring me home a portabello sandwich from the Black Sheep deli?”) or for poetical, non-referential pleasures (“The world is blue like an orange.”). The essential, defining purpose of language is as a tool for the contemplative mind to extract the unknowable “Ding an sich”—in the performance of which, as we are told over and over, language fails.

Well, now, that purported failure logically follows only if we accept the reductive proposition that, first, language is merely a matter of nouns and referents, and that, second, its essential purpose lies in its philosophical discourse. However, we are not constrained, either by logic or by common usage, to accept either proposition. Shakespeare (see Mark Antony above) along with just about everybody else in the world has already discovered and published other useful propositions for language. Here, for instance, is one such provocative idea:

From 1993 until sometime in 2000, this image/symbol was the name of the rock star ‘formerly known as Prince’. As such, it seems to me to turn postmodernism inside out, in that we have a sign connected to its signified without the medium of language at all.

To choose another instance, here is one of Charlie Chaplin’s famous opinions on the matter:

http://www.youtube.com/watch?v=_du8fjUN0Kg

For Chaplin, language appears to be an expressive act—extended sequentially through time—that necessarily involves gesture, facial expression and tone of voice, all of which transcend the literal vocabulary, which in this particular instance is composed of faux Italian*.

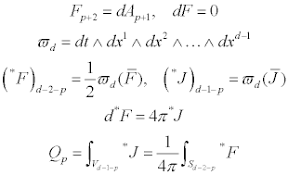

Of course, the ambiguity of language might in fact not be a function of all languages, but merely a feature of the Continental ones. For example, here is just a little of the mathematical language describing the physical reality of the twenty-six dimensional flat spacetime:

I admit that this is not a language that I find especially pertinent to how I live, but I do believe that this is the best language to be used by those men and women—those physicists—who are truly, successfully capturing the nature of the noumenon: the absolute physics of the universe.

If we do not commonly find among physicists the despair so often present in postmodernism, we also fail to locate individual differences in their mathematical language that will allow for particular people to identify themselves. Math is a universal language. It is better able to control its meanings, but at the expense of human definition, for which French, German, English, indeed virtually every other language, is far better suited, even though that individuation necessarily introduces ambiguities. What I mean when I articulate a thought is not always reliably grasped in its full import by my partner in conversation. My differences introduce ambiguity into expression. I am other than you are, and what I mean—the shades of purpose I convey, the tenor of my voice, the pacing I choose—is individually mine.

It is exactly this individuality against which philosophy has protested. And it is this protest that I, in my turn, would want to revalue. I am far from equating linguistic ambiguity with the despair of failed significance that we find everywhere lamented in postmodernism. I would argue instead that ambiguity—precisely because it prevents material control and the successful exercise of power—is a joyous escape from convention, the delight in play, the opportunity for humor, the wonder of the unexpected, the nature of hope.

Know what I mean?

*Here is the text of Chaplin’s Song:

Se bella giu satore

Je notre so cafore

Je notre si cavore

Je la tu la ti la twah

La spinash o la bouchon

Cigaretto Portabello

Si rakish spaghaletto

Ti la tu la ti la twah

Senora pilasina

Voulez-vous le taximeter?

Le zionta su la seata

Tu la tu la tu la wa

Sa montia si n’amora

La sontia so gravora

La zontcha con sora

Je la possa ti la twah

Je notre so lamina

Je notre so cosina

Je le se tro savita

Je la tossa vi la twah

Se motra so la sonta

Chi vossa l’otra volta

Li zoscha si catonta

Tra la la la la la la